Reflection

What it actually takes to get AI right

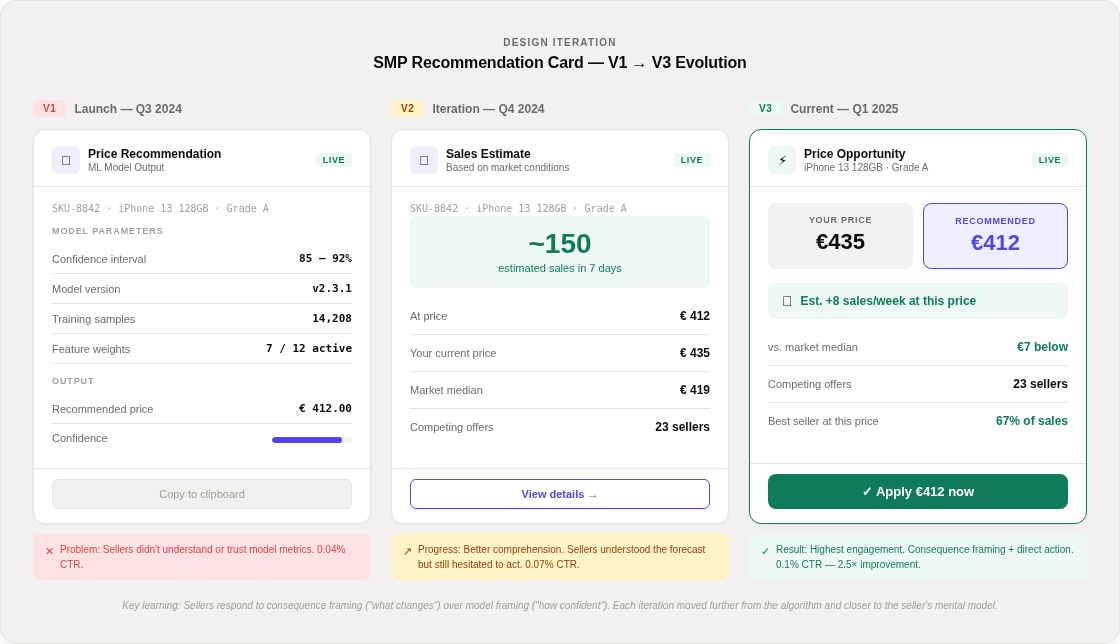

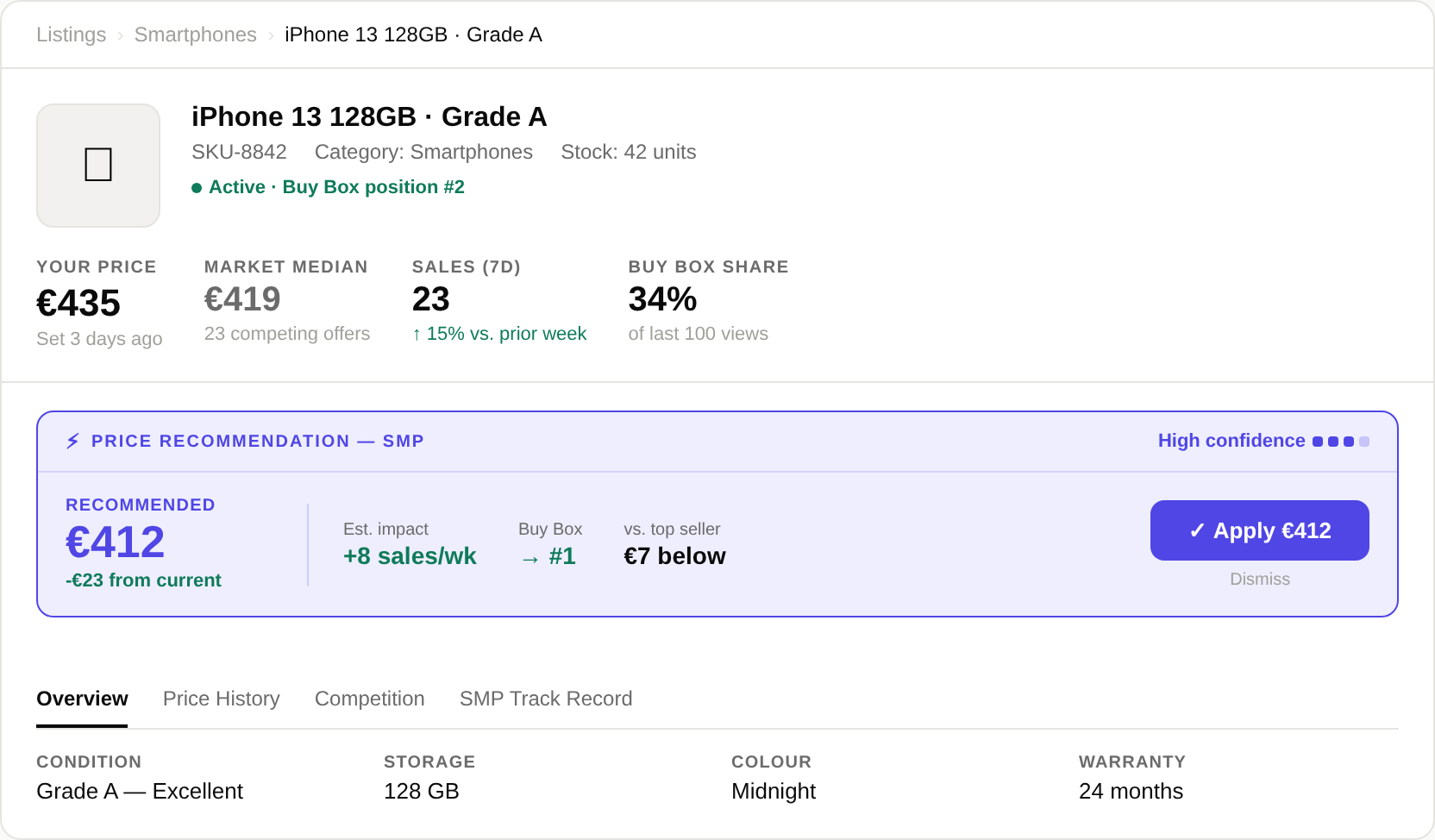

Every AI initiative in 2024 had pressure behind it: pressure to ship features with "AI" in the name, to show progress to the board, to keep pace with competitors. We pushed back on that. The question was never "How do we add AI to the Back Office?" It was "Where does AI actually make a seller's job easier, and how do we earn the right to put it there?" That framing shaped every decision in this programme. The data below shows where it worked and where we still fell short.

67%

of SMP price recommendation clicks come from sellers already winning their category

The feature was designed to help losing sellers. It's being used predominantly by winning sellers — as a confirmation signal, or a tool to identify when they can go further.

915

CSV data downloads in 3 months, vs a 0.2% in-product action rate on the same data

Sellers took the data and modelled decisions externally rather than trusting the in-product action. "I want your data. I'll do my own analysis."

€15

Average gap between AI-recommended price and what sellers actually set

This is the 2026 North Star metric: the gap we're designing to close. Success isn't measured by how many sellers click a recommendation, but by whether their actual prices move toward the AI's suggestion.

Figure: The gap we're designing to close (AI-recommended price €X versus what sellers actually set, €X+15).
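To make the North Star metric concrete, here is a minimal sketch of how the gap could be computed. It assumes the gap is measured per listing as the seller's actual price minus the AI-recommended price and then averaged. The document does not say whether the production metric is signed, absolute, or weighted, so both simple means are shown. `PricePair`, `signed_gap`, `absolute_gap`, and `moved_toward` are hypothetical names for illustration, not Back Market's implementation.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PricePair:
    """One listing at one point in time (hypothetical structure, prices in EUR)."""
    recommended: float  # price the AI recommended
    actual: float       # price the seller actually set


def signed_gap(pairs: list[PricePair]) -> float:
    """Mean of (actual - recommended).

    Positive means sellers price above the recommendation on average.
    Raises statistics.StatisticsError if `pairs` is empty.
    """
    return mean(p.actual - p.recommended for p in pairs)


def absolute_gap(pairs: list[PricePair]) -> float:
    """Mean of |actual - recommended|, ignoring direction."""
    return mean(abs(p.actual - p.recommended) for p in pairs)


def moved_toward(old_actual: float, new_actual: float, recommended: float) -> bool:
    """True if the seller's new price is closer to the recommendation than the old one."""
    return abs(new_actual - recommended) < abs(old_actual - recommended)


if __name__ == "__main__":
    # Illustrative numbers only, not programme data.
    pairs = [
        PricePair(recommended=100.0, actual=115.0),
        PricePair(recommended=200.0, actual=212.0),
        PricePair(recommended=50.0, actual=68.0),
        PricePair(recommended=80.0, actual=77.0),
    ]
    print(signed_gap(pairs))    # 10.5
    print(absolute_gap(pairs))  # 12.0
    print(moved_toward(old_actual=115.0, new_actual=108.0, recommended=100.0))  # True
```

Reporting both means matters: a signed mean can hide gaps in opposite directions that cancel out, while the absolute mean cannot. `moved_toward` captures the "are prices moving toward the suggestion" framing rather than counting clicks.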

The consistent finding: sellers express a coherent form of partial trust — they'll take the data, but not the recommendation. They're not disengaged; they're careful. The programme's job isn't to replace seller judgment — it's to give sellers the information to exercise it more confidently.

Figure: How sellers actually use AI recommendations. 100% of sellers receive an SMP recommendation → 67% of clicks come from sellers already winning → 915 CSV downloads in 3 months → 0.2% in-product action rate. Sellers extract the intelligence, not the recommendation.

These findings pointed to a single conclusion: sellers didn't reject AI — they rejected AI that hadn't earned their trust yet. The order we introduced features mattered as much as the features themselves.

What we'd do differently

The accountability we wanted required getting the order right. Each feature either builds credibility or creates suspicion that every subsequent feature inherits. Shipping AI that works isn't a technology problem — it's a planning problem.

Ideal sequence

1. Surface data (2–3 months)
2. Recommend (3–6 months)
3. Automate with consent
4. Catalogue intelligence

What actually happened

1. SMP launched (limited data phase)
2. DPU launched at Level 4, with no consent (!)
3. BackPricer succeeded (seller-configured)
4. Pricing Opportunities (inherited DPU scepticism)

If we were starting again — with the luxury of sequencing the programme around trust instead of around shipping deadlines — we'd move through four stages:


Stage 1: Surface data, make no recommendation

Before any AI recommendation, give sellers the raw data Back Market has that they don't: competitor pricing on their exact products, sales velocity rank, demand trends. No suggestion about what to do with it. Just visibility they've never had before. This proves the data is accurate before you attach a recommendation to it. Minimum duration: 2–3 months.

Automation Spectrum: Level 1


Stage 2: Single-product recommendation (SMP)

A price recommendation on one product at a time. Recommendation only — seller always decides. Framed around an outcome forecast: suggested price + estimated units sold in 7 days. This is the first moment sellers are asked to consider an AI recommendation. It needs to be right more often than it's wrong, for long enough to build the accuracy track record that 41% of sellers require. Minimum duration: 3–6 months before BackPricer.

Automation Spectrum: Level 2


Stage 3: Automation with consent (BackPricer)

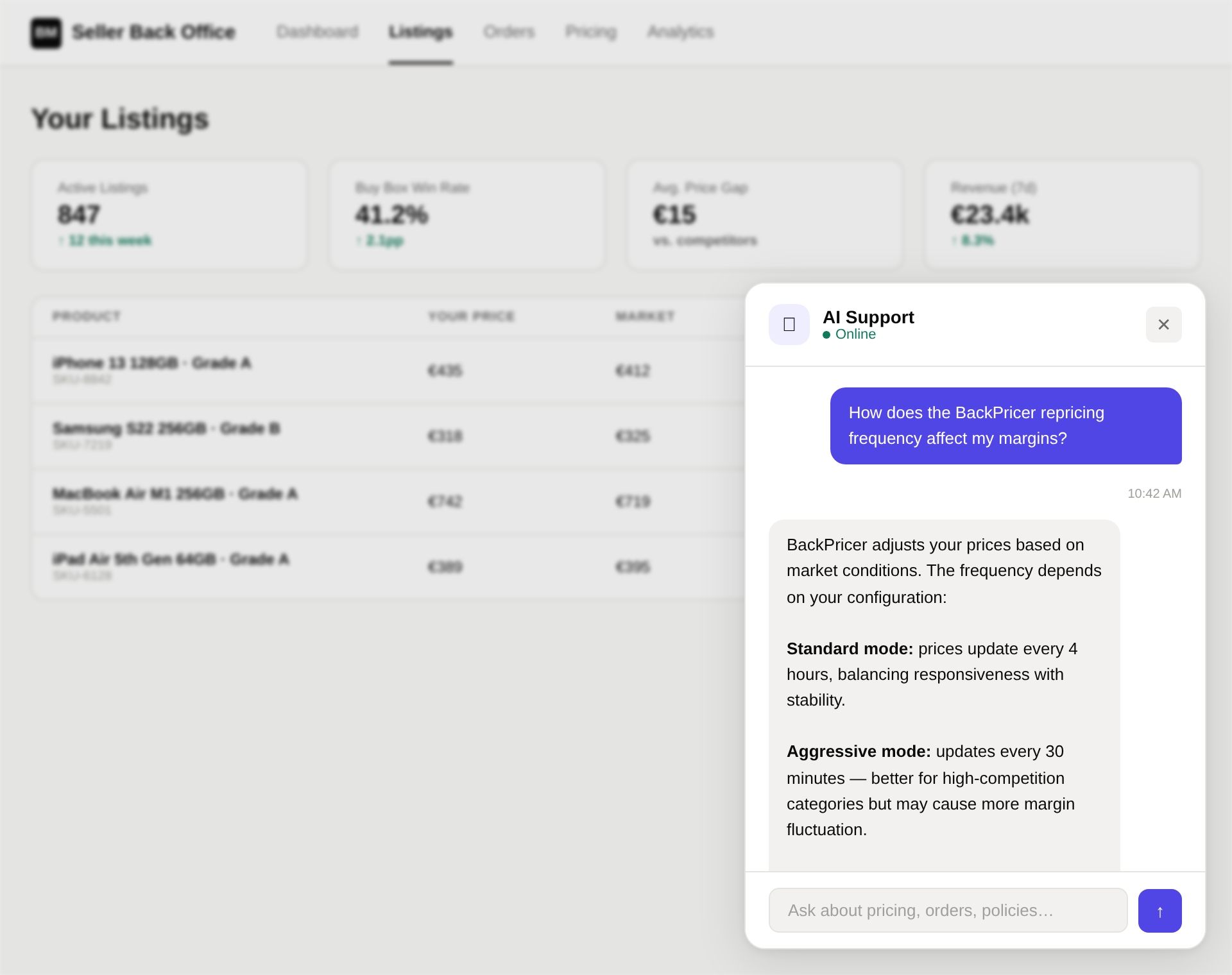

Sellers configure price bounds; the system reprices continuously within those bounds. Sellers have already experienced the pricing model as a recommendation — they've seen it work, they understand how it thinks. BackPricer asks them to extend that trust into automation, with the safeguard that the system can never act outside their defined parameters.
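The Stage 3 safeguard amounts to a hard clamp: whatever price the model proposes, the price actually applied stays inside the seller's configured bounds. The sketch below assumes bounds are a simple per-listing floor and ceiling and shows a single repricing step rather than the continuous loop. `SellerBounds` and `apply_reprice` are hypothetical names, not BackPricer's actual interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SellerBounds:
    """Hypothetical per-listing price bounds a seller configures (EUR)."""
    floor: float
    ceiling: float

    def __post_init__(self) -> None:
        # Reject misconfigured bounds when they are set, not at repricing time.
        if self.floor > self.ceiling:
            raise ValueError(f"floor {self.floor} exceeds ceiling {self.ceiling}")


def apply_reprice(proposed: float, bounds: SellerBounds) -> float:
    """Clamp a model-proposed price into [floor, ceiling].

    The system may move freely inside the seller's bounds but never outside them.
    """
    return min(max(proposed, bounds.floor), bounds.ceiling)


if __name__ == "__main__":
    bounds = SellerBounds(floor=180.0, ceiling=220.0)
    for proposed in (175.0, 199.0, 240.0):
        # 175.0 -> 180.0, 199.0 -> 199.0, 240.0 -> 220.0
        print(proposed, "->", apply_reprice(proposed, bounds))
```

Validating the bounds up front means a misconfiguration surfaces to the seller immediately, instead of producing a price they never agreed to.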

Automation Spectrum: Level 3


Stage 4: Catalogue-level intelligence (Pricing Opportunities)

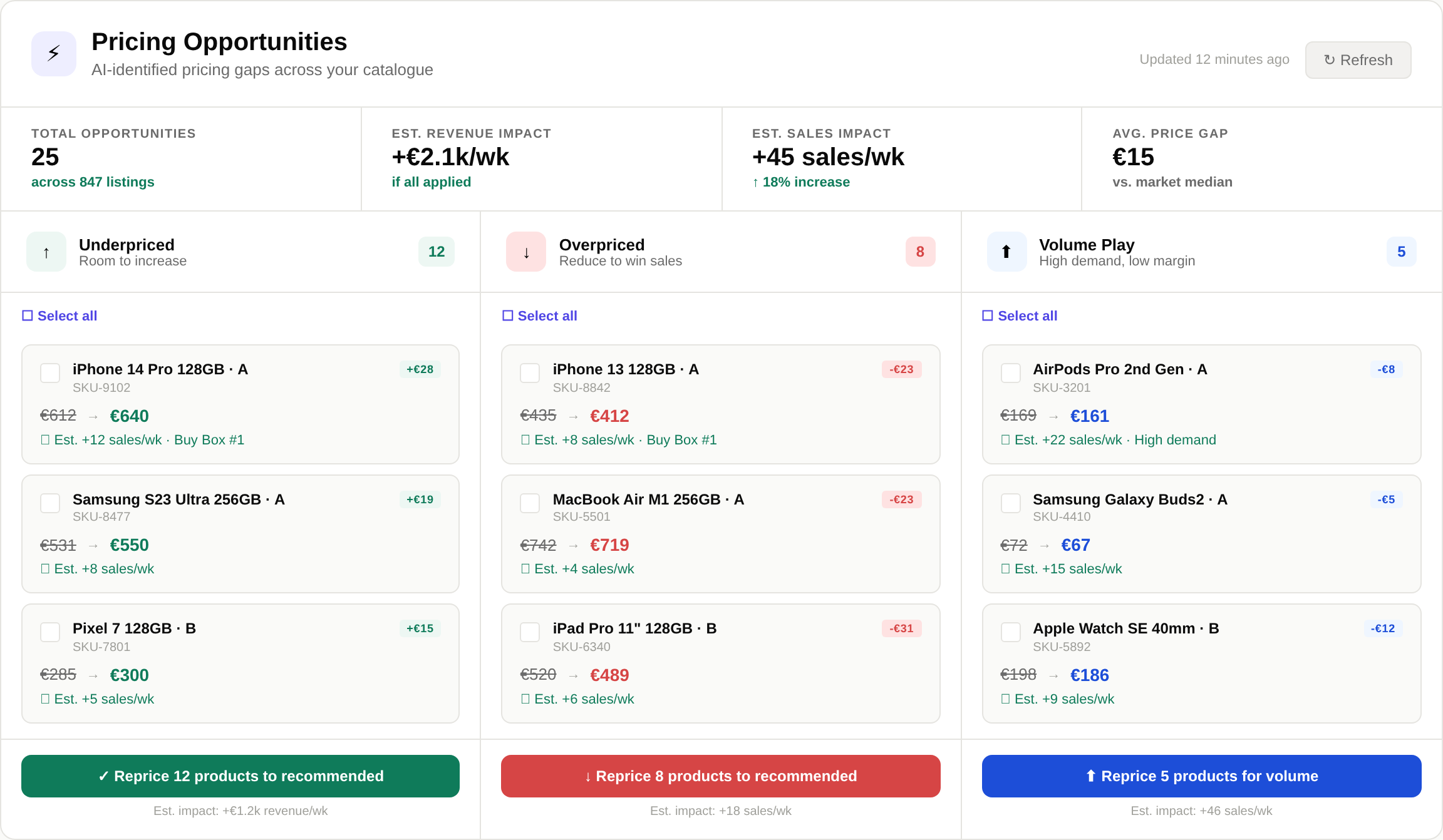

AI-surfaced actions across the seller's entire catalogue — Quick Wins, Listings in Trouble, BackBoxes to Boost — with bulk action controls. This requires sellers to trust the intelligence across their entire catalogue simultaneously. That trust is only possible after 6–12 months of experience with single-product recommendations and automated repricing.

Automation Spectrum: Levels 2–3

Figure: Trust builds sequentially; each stage earns the right to the next. The sequence runs from Stage 1, surface data (2–3 months, to prove data accuracy), through building an accuracy track record, to Stage 4, catalogue AI (after 6–12 months, full catalogue intelligence).

Based on seller research: 51.8% of sellers require control to accept or reject a recommendation, 47.3% require a transparent explanation, and 41.1% require proven accuracy over time.

The actual programme deviated under shipping pressure. BackPricer launched with a limited SMP track record. Dynamic Pricing Up went to Level 4 before the programme had earned it — exactly the kind of "ship AI because we can" decision we'd set out to avoid. The trust damage cast doubt on every subsequent AI feature.

Where this goes next

This is Phase 1 of a multi-year programme. The roadmap operates across three horizons:


Near-term — closing the €15 gap

The €15 gap between AI-recommended and actual seller prices is the 2026 North Star metric. Focus: improving recommendation legibility, surfacing accuracy track records, and evolving the chat assistant from Q&A to task execution.


Medium-term — system-level intelligence

Moving from isolated features to a coherent AI layer: proactive anomaly detection on the dashboard, AI-assisted pricing at listing creation, and demand forecasting in inventory management.


Long-term — the transferable framework

The Automation Spectrum, trust requirements, and sequencing model aren't Back Market-specific. They apply to any professional platform where users have structural reasons to be cautious about automation.

Programme roadmap

2026 H1: Closing the €15 gap
- Q1: Pause FR/ES Deals for DPU analysis
- Q1: SMP copy + trust improvements
- Q1–Q2: ML model for Dynamic Adjustment Fee
- Q2: Deals relaunch with DPU learnings

2026 H2: System-level intelligence
- Q3: Dynamic Pricing Down (BM-financed)
- Dashboard anomaly detection
- AI-assisted pricing at listing creation
- Demand forecasting in inventory

2027+: Ambient AI
- Pricing Agent (AI sets prices in seller bounds)
- Catalog Agent (AI for listing creation)
- Support chatbot → task execution
- Transferable framework beyond BM

The lesson: AI is powerful, but only when the planning behind it is as rigorous as the technology itself. You can't design your way to trust with a user base that has structural reasons to be cautious, and you can't ship your way there by adding more features. What earns trust is a sequence of AI tools that do what they say, over months and across thousands of sellers, each built not because AI was the trend but because it made a seller's job measurably easier. The design job is to structure that sequence accountably.